– By Jeff Errhabor

One way to take proactive cybersecurity to a higher level is to build the ability to predict, with high precision, the cyberattacks that could target the environment a cybersecurity team defends. The growing number of non-traditional IT assets in technology systems (APIs, IoT devices, web browser extensions, dependent libraries), although designed to make work easier, also enlarges the vulnerability footprint and the attack surface. A simple example is web browser extensions, which extend the attack surface beyond the vulnerabilities of the browser itself. This complexity is compounded by the accelerating sophistication of cyber adversaries, who now leverage artificial intelligence to discover and exploit weaknesses at unprecedented speed and scale.

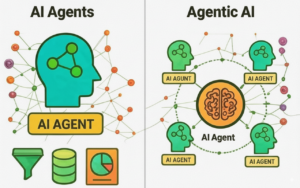

To maintain a proactive pace, cybersecurity professionals must embrace some form of AI technology. AI, AI Agents, and Agentic AI offer a transformative approach to anticipating potential cyberattacks: they can ingest large feeds of data from diverse threat intelligence sources and correlate that data with the assets within an organization, autonomously and with little to no human intervention. Agentic AI takes this further. It can monitor in real time, pursue goals without detailed direction on how to act, understand context, reason, plan, prioritize, strategize, make independent adjustments, evaluate outcomes, and tune itself, all to achieve its assigned goals. In this case, that means autonomously processing and correlating vast streams of input data, including comprehensive IT asset inventories (hardware, software, software libraries, APIs, and personnel skill strengths), network traffic patterns and content, official OEM security bulletins, threat intelligence feeds, and open-source vulnerability databases, then generating precise predictions of targeted cyberattacks and activating early warning systems.

The question that then arises is how to prevent such AI/Agentic AI systems from disclosing those vulnerabilities to unintended users.

Agentic AI and Their Role in Predicting Potential Cyberattacks

As described earlier, Agentic AI systems are designed to act autonomously. Compared to AI Agents, they can handle more general tasks because they can understand context, reason, plan, prioritize, adjust their strategy, and evaluate outcomes, all with the intent of achieving assigned goals.

Agentic AIs are useful in predicting potential malware attacks, as they have the ability to:

- Integrate data sources such as the asset inventory, and even fill gaps in that inventory by listening to network traffic and detecting the assets that generate it and their locations in the network. This improves the completeness of the asset inventory, surfaces hard-to-detect assets such as the libraries that software depends on and the extensions installed in web browsers, and even reveals harmful “assets” such as shadow IT and shadow AI.

- Consume, analyze, and streamline large amounts of threat intelligence, especially intelligence related to the assets in use in the environment, and even estimate the importance of known and unknown assets based on network traffic analysis. The result is a simplified list of key vulnerabilities ranked by severity.

- Predict the attack methods based on a number of factors, such as known attack trends that have impacted similar industries, similar assets, and similar asset behavior.

- Continuously and automatically monitor, alert on, and predict cyberattacks in real time, even as new assets are introduced and new vulnerabilities are discovered and exploited in the wild.

- Recommend ways to prevent a malware attack before it occurs and, more generally, anticipate attacks before they happen.
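The correlation and ranking steps in the list above can be illustrated with a minimal sketch. It assumes a toy data model: the `Asset` and `Advisory` classes, the `rank_exposures` function, the `wild_boost` multiplier, and the scoring formula (severity times asset importance) are all hypothetical choices made for illustration, and the vulnerability IDs are placeholders rather than real CVEs. A real agentic system would draw on live inventories and threat feeds and use a far richer model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    name: str          # hypothetical asset identifier
    product: str       # product or library the asset runs
    version: str
    importance: float  # 0.0-1.0, e.g. inferred from network traffic analysis


@dataclass(frozen=True)
class Advisory:
    vuln_id: str                  # placeholder ID, not a real CVE
    product: str
    affected_versions: frozenset  # versions the advisory applies to
    severity: float               # 0.0-10.0, CVSS-like scale (assumption)
    exploited_in_wild: bool


def rank_exposures(assets, advisories, wild_boost=1.5):
    """Match advisories to assets and return findings sorted by risk score."""
    findings = []
    for asset in assets:
        for adv in advisories:
            if adv.product != asset.product:
                continue
            if asset.version not in adv.affected_versions:
                continue
            # Illustrative score: severity weighted by asset importance,
            # boosted when the vulnerability is already exploited in the wild.
            score = adv.severity * asset.importance
            if adv.exploited_in_wild:
                score *= wild_boost
            findings.append((round(score, 2), asset.name, adv.vuln_id))
    # Highest score first; ties broken by asset name, then ID, for stable output.
    return sorted(findings, key=lambda f: (-f[0], f[1], f[2]))


if __name__ == "__main__":
    assets = [
        Asset("hr-portal", "examplelib", "2.1", importance=0.8),
        Asset("kiosk-browser", "example-extension", "1.0", importance=0.4),
    ]
    advisories = [
        Advisory("VULN-001", "examplelib", frozenset({"2.0", "2.1"}), 7.5, True),
        Advisory("VULN-002", "example-extension", frozenset({"1.0"}), 9.0, False),
        Advisory("VULN-003", "examplelib", frozenset({"1.9"}), 9.8, False),
    ]
    for score, asset_name, vuln_id in rank_exposures(assets, advisories):
        print(f"{score:>6} {asset_name} {vuln_id}")
```

In this sketch a lower-severity advisory on an important asset can outrank a higher-severity one on a minor asset, which mirrors the idea of prioritizing vulnerabilities by their relevance to the environment rather than by raw severity alone.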

Having such powerful tools (Agentic AI) in your environment also introduces significant risks, such as the Agentic AI being exploited to reveal all the vulnerabilities to an unauthorized user or an attacker.

Recommendations on How to Manage the Risk of Using Such a System (Agentic AI) for Predicting Potential Cyberattacks

Agentic AI systems are very powerful, and they are here to stay. They can be considered AI Agents; however, not all AI Agents are Agentic AI. Architecturally, an Agentic AI system can be represented as a network of many connected AI agents.

The complexity of Agentic AI, combined with its presence inside an enterprise’s network with access to databases, documents, inventories, and so on, requires cybersecurity professionals to study how Agentic AI systems communicate, how they are architected, and how to limit the risk associated with their use. The same happened when IoT became a concern: cybersecurity professionals learned the limitations and weaknesses of IoT devices and designed their networks to accommodate them with minimal risk. A similar approach followed when APIs became pervasive, and API security and the OWASP API Security Top 10 became a reference. There is now the OWASP GenAI Security Project and the OWASP Top 10 for LLM and Generative AI.

To secure Agentic AI systems, consider the following recommendations:

- Ensure there is a robust authentication and authorization system built into the Agentic AI systems; not just anyone should be able to send a prompt to the Agentic AI requesting the list of vulnerabilities.

- Ensure Agentic AI systems, and all AI systems used within an organization, are designed and built with risk awareness and guardrails from the outset, anticipating adversarial manipulation [1]. DeepSeek shows that built-in guardrails are feasible: the model censors information that is critical of or sensitive to the Chinese government because it was built with those guardrails.

- Understand the direction of the traffic from the Agentic AI (east-west traffic, north-south traffic, etc.) to know what information leaves the organization and what information stays within it, much as network security professionals block and allow certain network ports and protocols to control network traffic.

- Since vulnerable agents in a network of agents can pose risks, invest time in understanding the protocols used in communication among agents, such as Google's Agent2Agent (A2A) protocol, Anthropic's Model Context Protocol (MCP), Agent Network Protocol (ANP), Agent Connect Protocol (ACP), AGNTCY, etc. [2].

- Build controls for Agent-to-Agent security: implement controls so that a single exploited AI agent cannot be used to compromise the rest of a network of AI Agents. There is also research on Zero Trust models in Agentic AI [3].

- Understand the role of Human-in-the-Loop (HITL) in AI, and do not depend solely on the output from Agentic AI without some validation, optimization, and retraining.

- Consider studying the following white papers, frameworks or research on Agentic AI:

- Agentic AI: Threats and Mitigations by OWASP GenAI Security Project.

- NIST AI 100-1: Artificial Intelligence Risk Management Framework (AI RMF 1.0), a robust framework whose guidelines resemble those in the NIST Cybersecurity Framework and the NIST Risk Management Framework [4].

- OWASP Top 10 for Large Language Model Applications.

- OWASP Machine Learning Security Top Ten.

- Participate and learn from the OWASP GenAI Security Project: https://genai.owasp.org/

- Proofs of concept for secure delegation in autonomous Agentic AI, designed to verify user intent without disrupting the pace at which AI Agents work, such as “Agentic JWT: A Secure Delegation Protocol for Autonomous AI Agents”.

- Frameworks for implementing Zero Trust in Agentic AI, such as “A Novel Zero-Trust Identity Framework for Agentic AI: Decentralized Authentication and Fine-Grained Access Control”.
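Two of the recommendations above, authorization in front of the agent and Agent-to-Agent controls, can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the Agentic JWT protocol or the zero-trust framework named in the list: the role names, the `ROLE_PERMISSIONS` table, and the functions `is_authorized`, `handle_prompt`, `sign_message`, and `verify_message` are hypothetical, and a pre-shared HMAC key stands in for whatever identity mechanism a real deployment would use.

```python
import hashlib
import hmac
import json

# Hypothetical role-to-permission mapping; a real deployment would pull this
# from the organization's identity provider rather than hard-coding it.
ROLE_PERMISSIONS = {
    "security_analyst": {"read_vulnerabilities", "read_predictions"},
    "helpdesk": {"read_predictions"},
}


def is_authorized(role: str, action: str) -> bool:
    """Deny by default: unknown roles and unlisted actions are rejected."""
    return action in ROLE_PERMISSIONS.get(role, set())


def handle_prompt(role: str, action: str, run_agent) -> str:
    """Check authorization before the request ever reaches the agent."""
    if not is_authorized(role, action):
        return "DENIED"
    return run_agent(action)


def sign_message(shared_key: bytes, payload: dict) -> str:
    """Return a hex HMAC-SHA256 tag over a canonical JSON encoding."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(shared_key, body, hashlib.sha256).hexdigest()


def verify_message(shared_key: bytes, payload: dict, tag: str) -> bool:
    """Constant-time check that the payload was signed with the shared key."""
    expected = sign_message(shared_key, payload)
    return hmac.compare_digest(expected, tag)


if __name__ == "__main__":
    # Stand-in for the real agent call (assumption for the demo).
    fake_agent = lambda action: f"agent handled: {action}"
    print(handle_prompt("helpdesk", "read_vulnerabilities", fake_agent))
    print(handle_prompt("security_analyst", "read_vulnerabilities", fake_agent))

    key = b"demo-key-do-not-use-in-production"
    message = {"from": "inventory-agent", "task": "list_assets"}
    tag = sign_message(key, message)
    tampered = dict(message, task="dump_vulnerabilities")
    print(verify_message(key, message, tag), verify_message(key, tampered, tag))
```

The gate sits outside the model, so a cleverly worded prompt cannot talk its way past it, and the receiving agent rejects any message whose content was altered after signing. A shared key is the simplest option to show; it does not provide per-agent identity or delegation, which is what the cited research addresses.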

References

[1] C. Akiri, H. Simpson, K. Aryal, A. Khanna, and M. Gupta, “Safety and Security Analysis of Large Language Models: Risk Profile and Harm Potential,” 2025, arXiv. doi: 10.48550/ARXIV.2509.10655.

[2] I. Habler, K. Huang, V. S. Narajala, and P. Kulkarni, “Building A Secure Agentic AI Application Leveraging A2A Protocol,” 2025, arXiv. doi: 10.48550/ARXIV.2504.16902.

[3] K. Huang et al., “A Novel Zero-Trust Identity Framework for Agentic AI: Decentralized Authentication and Fine-Grained Access Control,” 2025, arXiv. doi: 10.48550/ARXIV.2505.19301.

[4] E. Tabassi, “Artificial Intelligence Risk Management Framework (AI RMF 1.0),” National Institute of Standards and Technology (U.S.), Gaithersburg, MD, NIST AI 100-1, Jan. 2023. doi: 10.6028/NIST.AI.100-1.